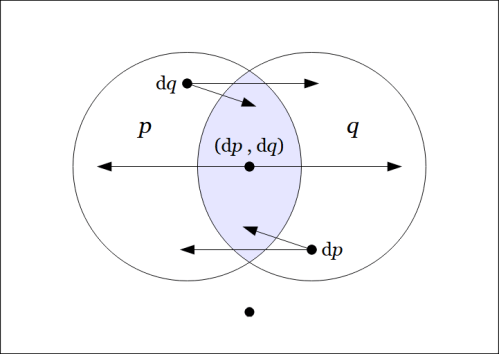

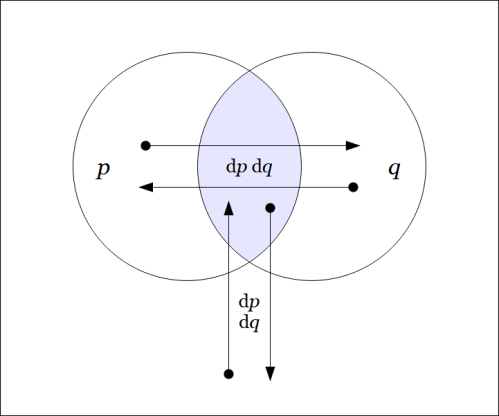

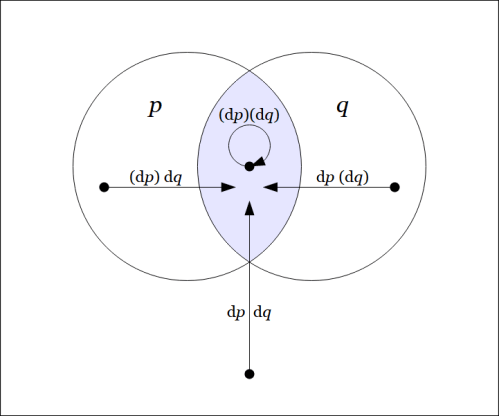

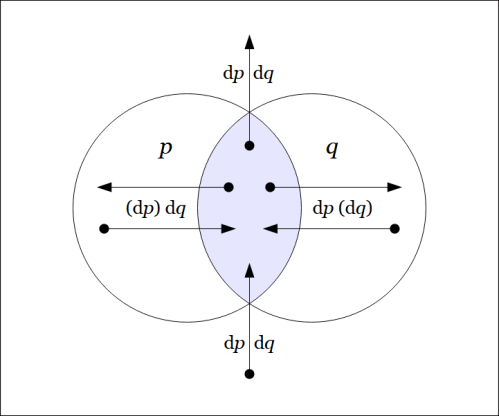

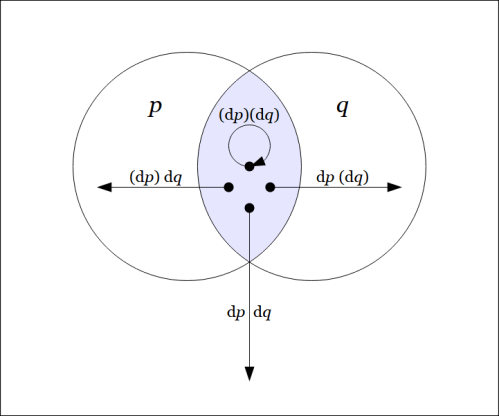

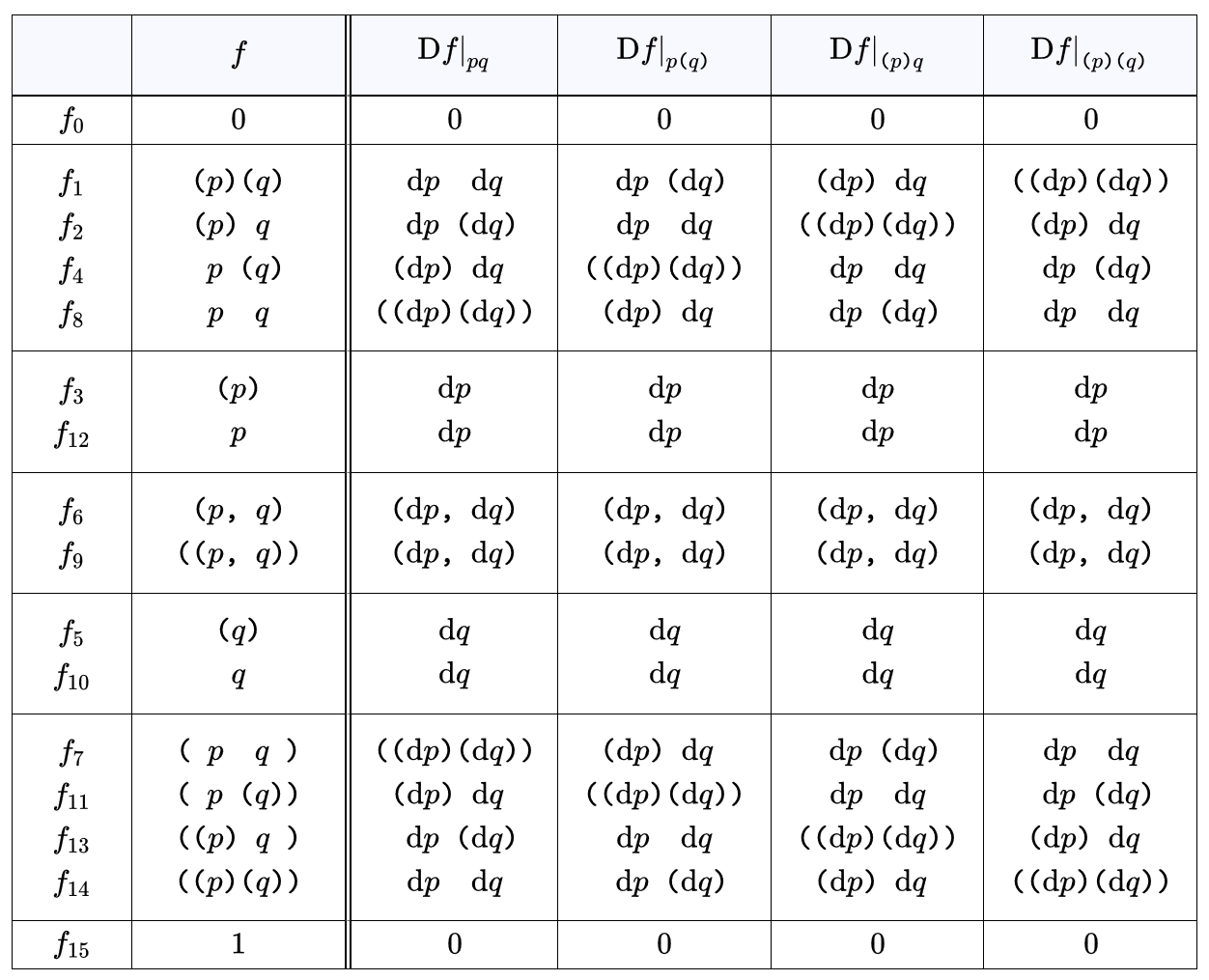

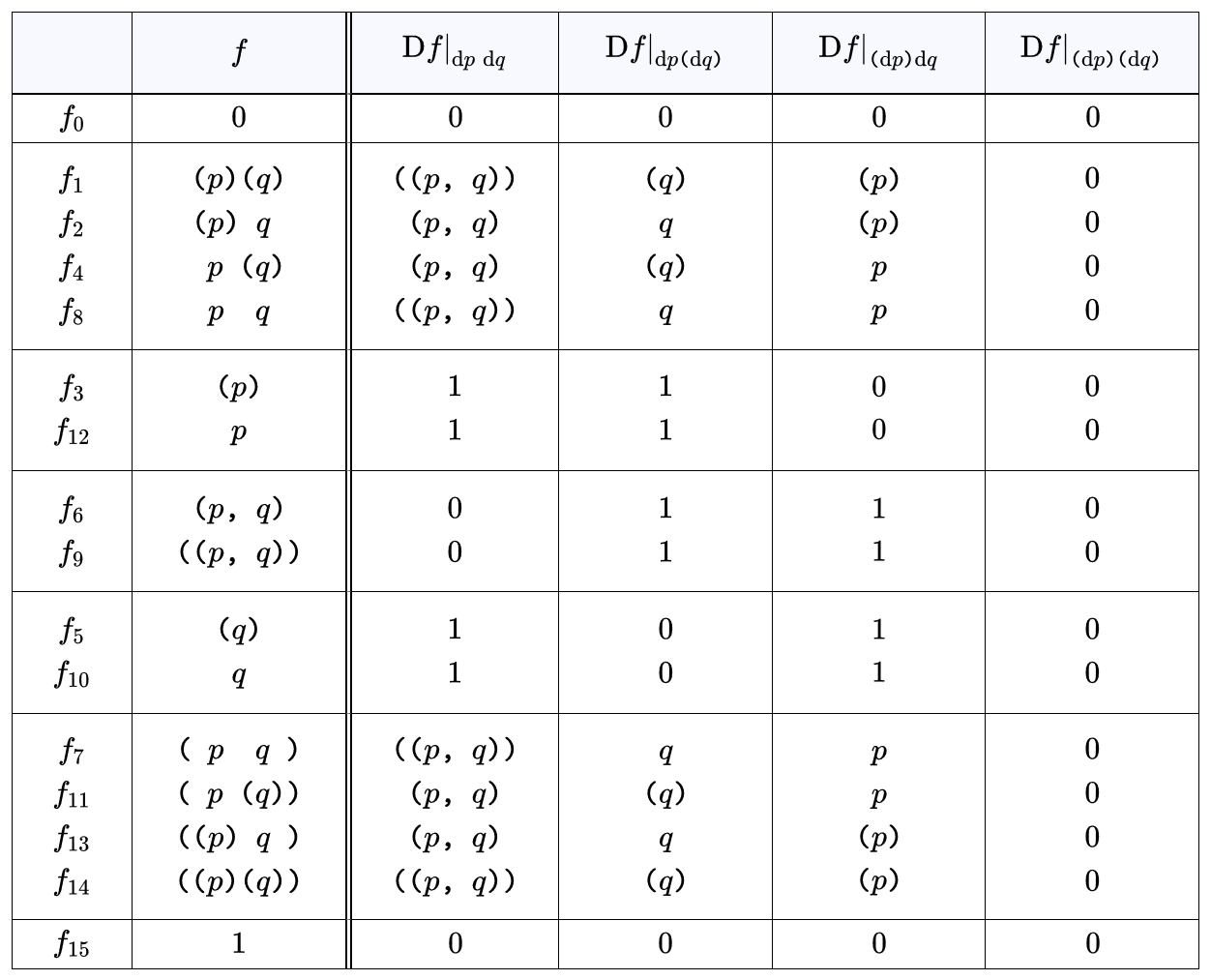

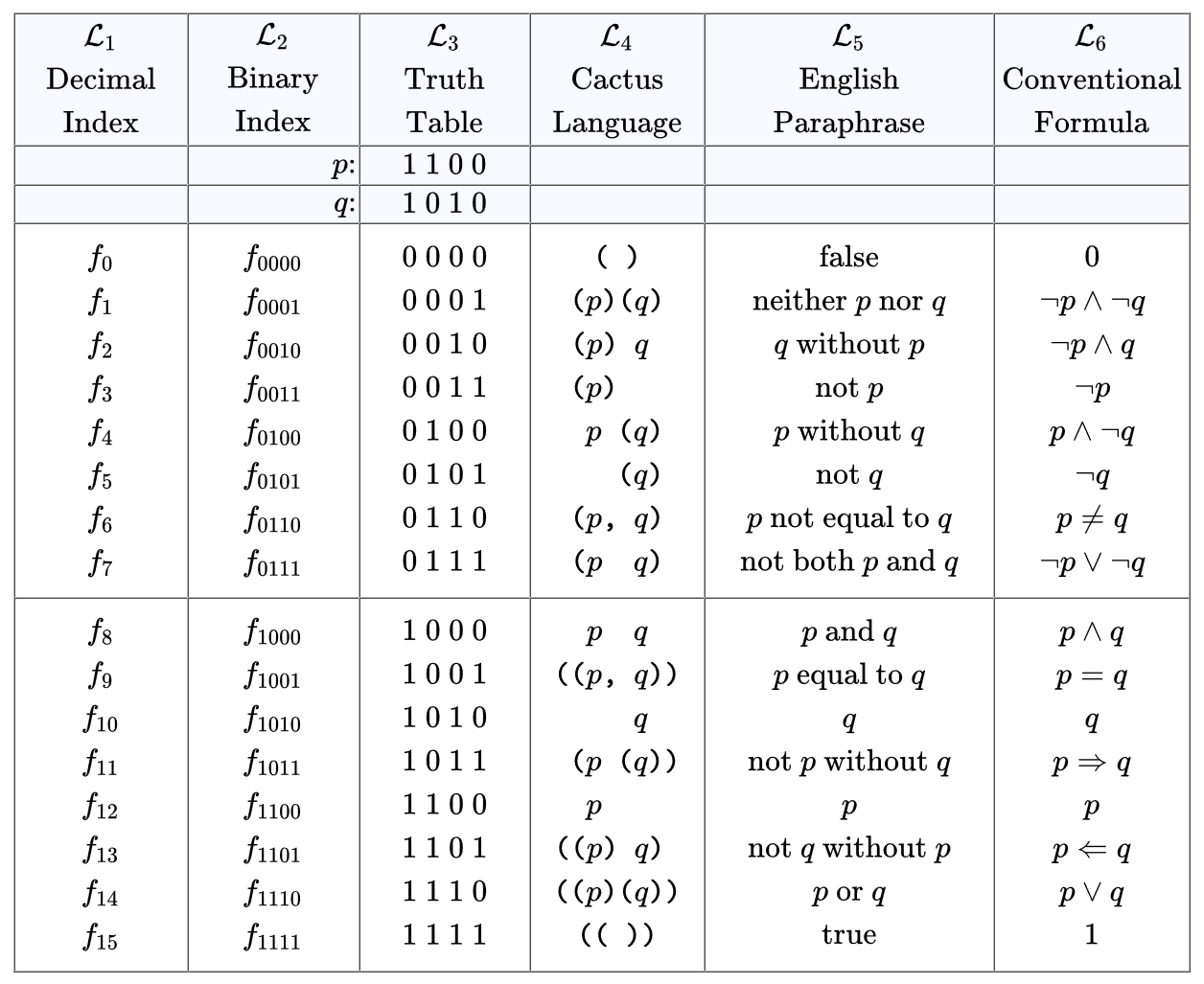

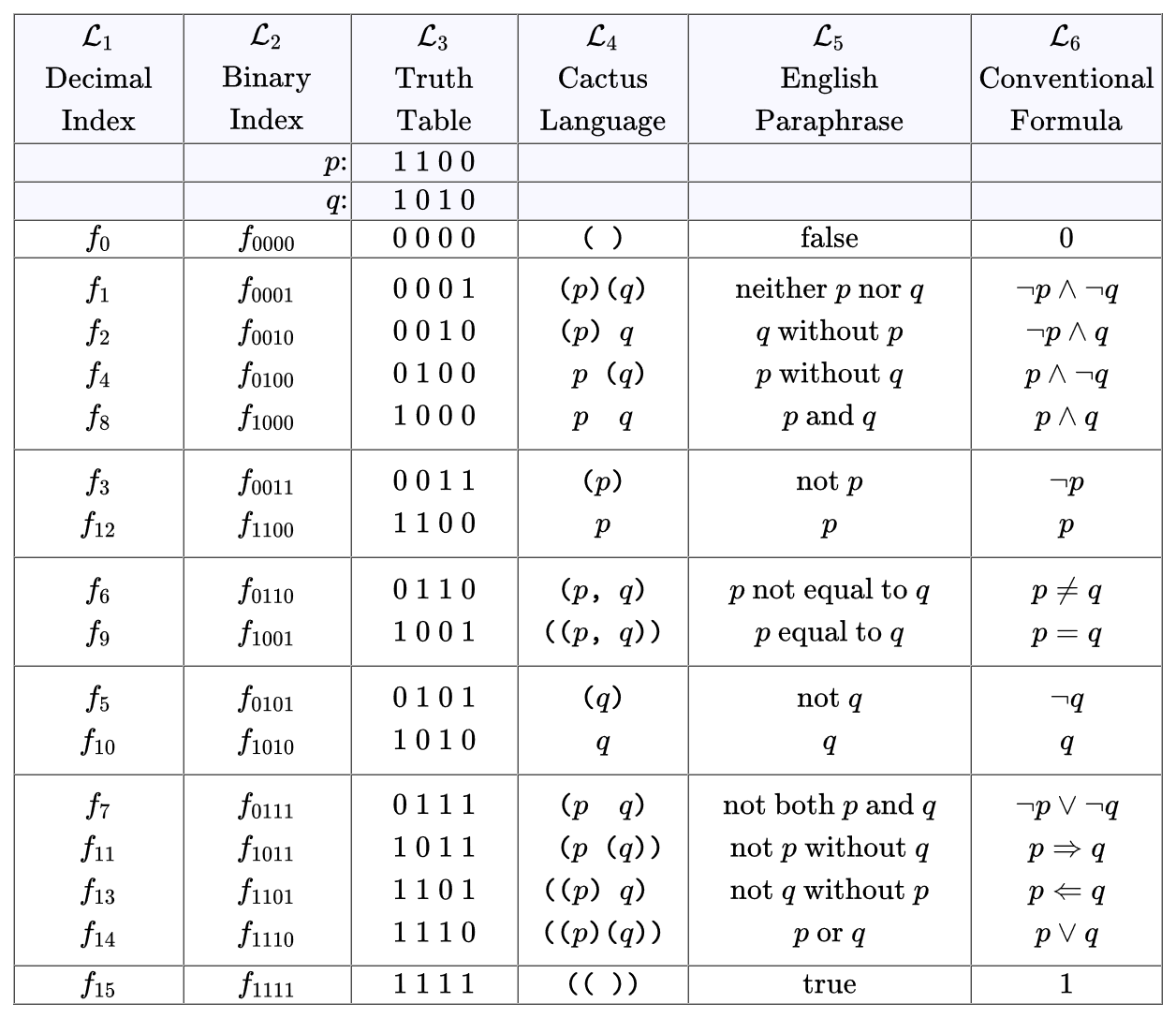

Differential logic is the logic of variation — the logic of change and difference.

Differential logic is the component of logic whose object is the description of variation — the aspects of change, difference, distribution, and diversity — in universes of discourse subject to logical description. A definition as broad as that naturally incorporates any study of variation by way of mathematical models, but differential logic is especially charged with the qualitative aspects of variation pervading or preceding quantitative models.

To the extent a logical inquiry makes use of a formal system, its differential component treats the use of a differential logical calculus — a formal system with the expressive capacity to describe change and diversity in logical universes of discourse.

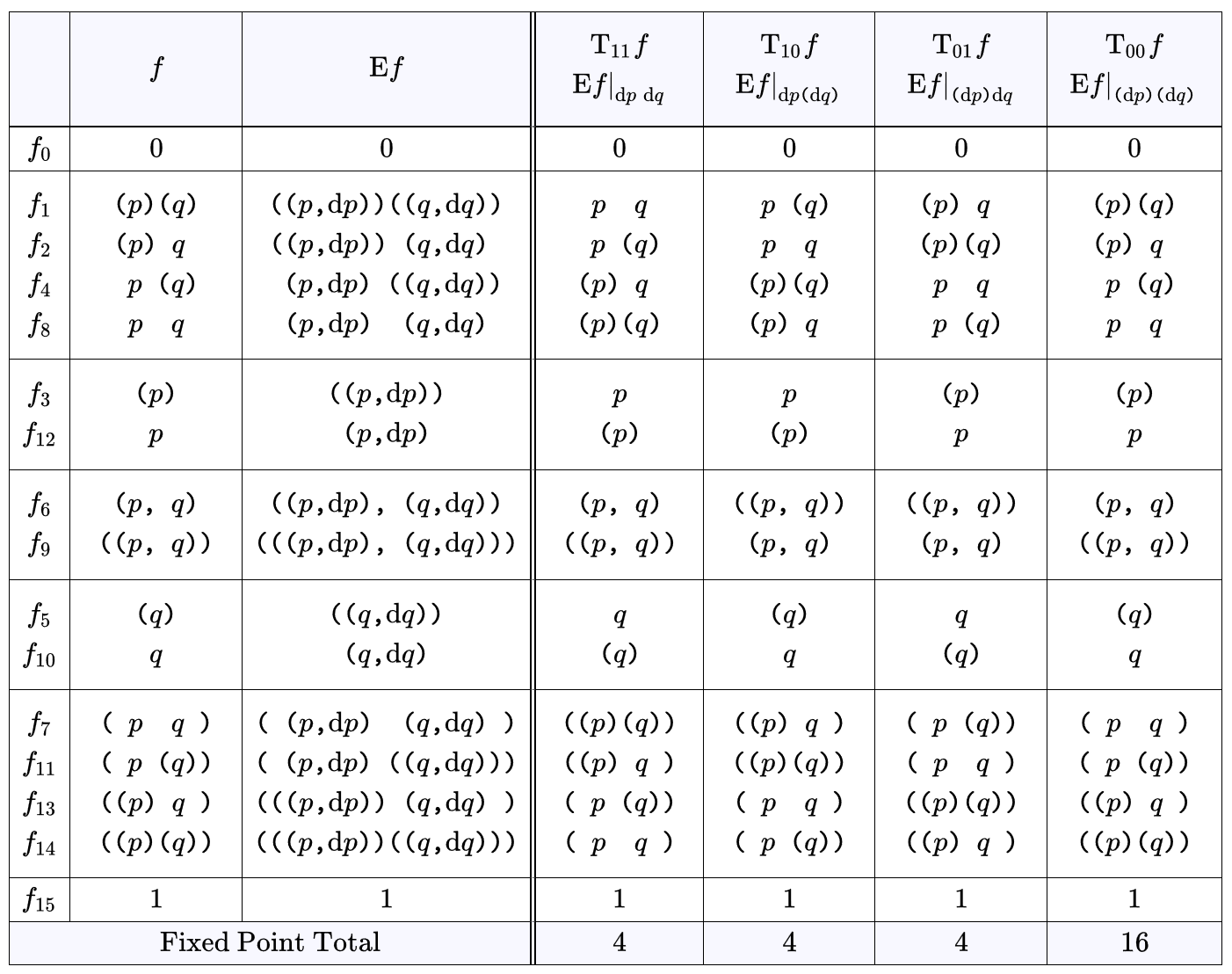

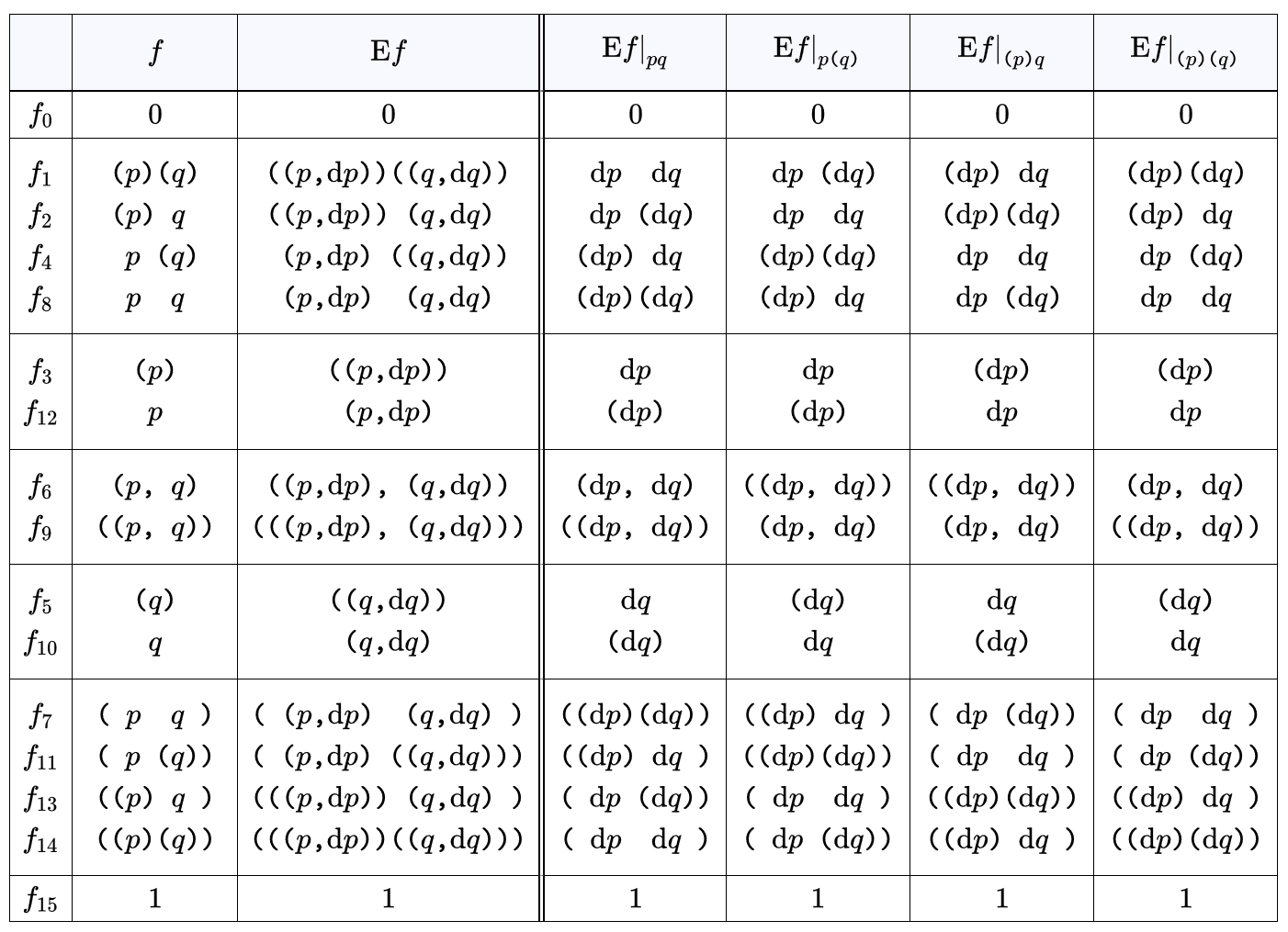

A simple case of a differential logical calculus is furnished by a differential propositional calculus, a formalism which augments ordinary propositional calculus in the same way the differential calculus of Leibniz and Newton augments the analytic geometry of Descartes.
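To make the analogy concrete for two propositional variables, here is a minimal Python sketch. It assumes a convention common in treatments of the Boolean difference: a change in a variable is a bit `dp` or `dq` that flips the variable when it equals 1, so the changed value of `p` is `p ^ dp` (exclusive-or). The names `enlargement`, `difference`, `conj`, and `d_conj` are hypothetical, chosen only for this illustration. They are not taken from any particular formalism's notation. For the proposition "p and q", the sketch computes which changes alter its truth value at each point of the universe.

```python
from itertools import product

# Propositions over the variables p, q are modeled as functions of two bits (0 or 1).
# Assumption: a change is a pair of bits (dp, dq); a set bit flips its variable,
# so "p after the change" is p ^ dp.

def enlargement(f):
    """E f(p, q, dp, dq) = f(p ^ dp, q ^ dq): the value of f after the change (dp, dq)."""
    return lambda p, q, dp, dq: f(p ^ dp, q ^ dq)

def difference(f):
    """D f = f ^ E f: equals 1 exactly when the change (dp, dq) flips the value of f."""
    ef = enlargement(f)
    return lambda p, q, dp, dq: f(p, q) ^ ef(p, q, dp, dq)

def conj(p, q):
    """The proposition 'p and q'."""
    return p & q

d_conj = difference(conj)

# For each cell (p, q), list the changes (dp, dq) that change the value of 'p and q'.
for p, q in product((0, 1), repeat=2):
    flips = [(dp, dq) for dp, dq in product((0, 1), repeat=2) if d_conj(p, q, dp, dq)]
    print(f"p={p} q={q}: value changes under {flips}")
```

Running it shows that at `p=1 q=1` any change in p, in q, or in both falsifies the conjunction. At `p=0 q=0`, only a change in both variables makes it true. This is the qualitative, discrete counterpart of asking how a function varies under small changes in its arguments.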

Resources

- Logic Syllabus

- Survey of Differential Logic

- Differential Logic • Part 1 • Part 2 • Part 3

- Differential Propositional Calculus • Part 1 • Part 2

- Differential Logic and Dynamic Systems • Part 1 • Part 2 • Part 3 • Part 4 • Part 5

cc: Academia.edu • Cybernetics • Laws of Form • Mathstodon

cc: Research Gate • Structural Modeling • Systems Science • Syscoi